Every learning experience starts with one question.

Who is the learner?

The learners in this project are the people who design and teach Springfield State University's graduate program in Learning and Performance Technology: faculty, program coordinators, and curriculum decision-makers. They are experienced professionals who care deeply about graduate outcomes and take seriously their responsibility to prepare students for real workplace demands. They have built a program that is nationally ranked, fully online, and grounded in authentic, team-based client work. What they needed was a clear, evidence-based picture of whether their curriculum's expectations for communication and collaboration still matched what the field was actively hiring for.

Why does it matter to them?

A program review happens every three years. The question it has to answer is honest and relevant: has the world moved faster than the curriculum? If workplace expectations for collaboration and communication have shifted and the program hasn't kept pace, graduates feel that gap first: in interviews, in early roles, and in moments where they weren't prepared for something they should have been. The program's reputation, enrollment, and relationships with industry partners all rest on well-prepared graduates being the norm. This needs assessment was designed to find out whether a gap existed, and if so, where.

Performance Gap and Design Rationale

The gap wasn't about whether the program taught communication and collaboration. It did. The gap was about clarity and consistency. Course materials referenced effective collaboration but rarely defined what it looked like in observable, behavioral terms. Students could complete a semester-long team project, hit all the deliverable deadlines, and still not have received explicit guidance on handling conflict, giving feedback, or adapting their communication style under pressure.

Chevalier's updated Behavior Engineering Model framed the analysis. It pushed the team to look at the environment first, before drawing conclusions about individual students, which turned out to be exactly right. Portfolio data showed students graduating with scores of 95.9 out of 100 on communication and collaboration outcomes. The issue wasn't student capability. It was that the performance environment (the expectations, tools, and feedback structures around team-based work) wasn't consistently set up to develop the specific workplace-relevant behaviors employers were asking for.

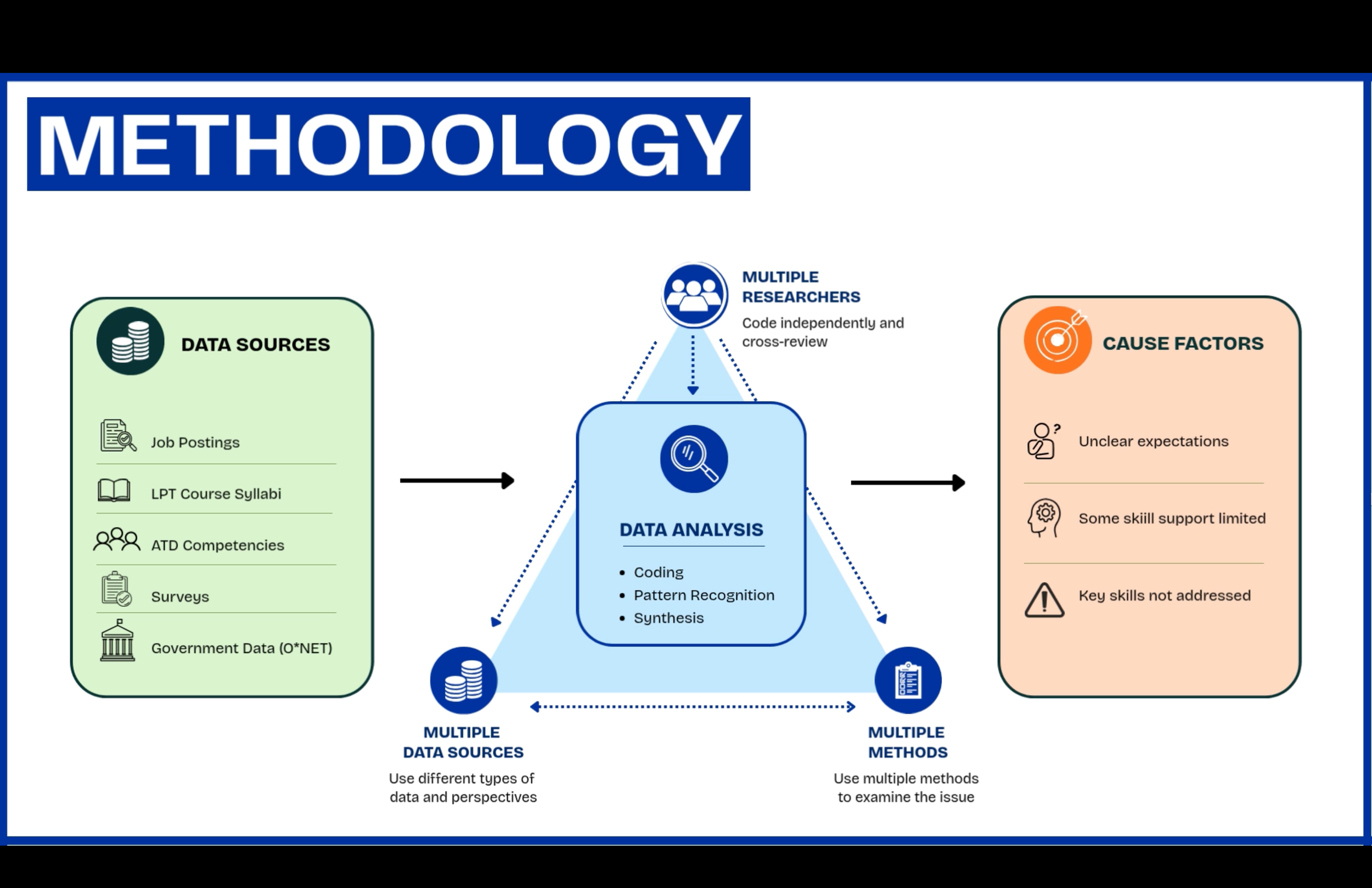

Data came from four external sources: job postings on LinkedIn and Indeed, ATD's Talent Development Capability Model, O*NET occupational data, and a practitioner survey of working L&D professionals. Each source was coded independently by multiple analysts and cross-reviewed. The synthesis revealed four key findings that shaped the recommendations.

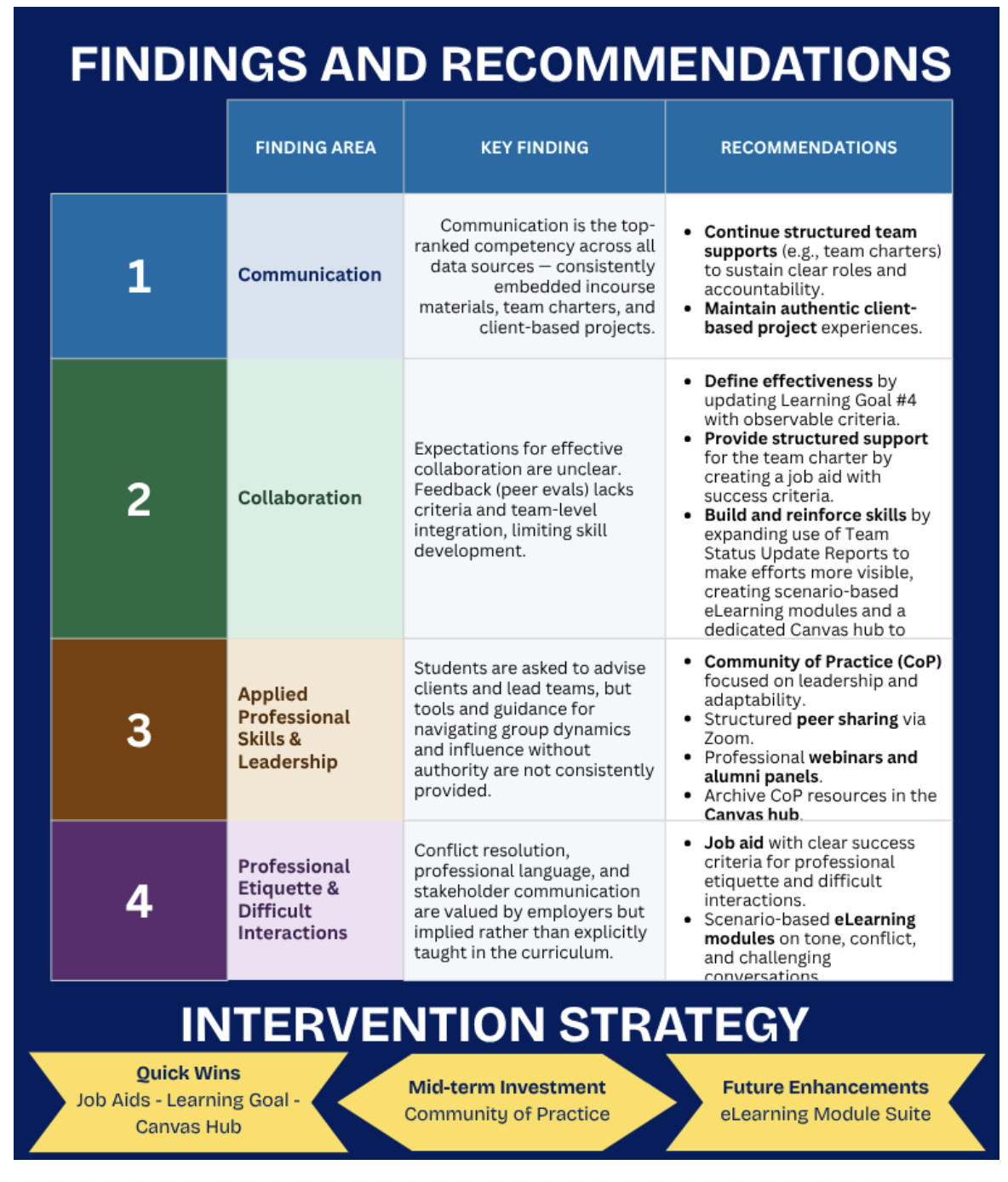

Key Findings

Finding 1: Communication is a strength. The program has a strong, consistent foundation in written, oral, and visual communication. These skills are clearly and intentionally woven throughout the curriculum and well supported by structured activities and authentic client projects.

Finding 2: Collaboration needs clearer expectations. Collaboration is valued and practiced, but not always explicitly defined. Students aren't always shown what effective collaboration looks like, how it will be evaluated, or how to develop those skills across the arc of a project. Peer feedback mechanisms tend to lack clear criteria and aren't consistently connected to reflection or skill development.

Finding 3: Applied professional skills need more scaffolding. Students advise clients, manage team dynamics, and navigate conflict, but the tools and guidance to do those things well are inconsistent across courses. The concepts are introduced, but structured support isn't always there in the moments students most need it.

Finding 4: Some critical skills aren't explicitly taught. Conflict resolution, professional language, and giving and receiving feedback were named by practitioners as key differentiators between high and low performers. They're largely implied in the curriculum rather than directly taught.

Intervention Strategy

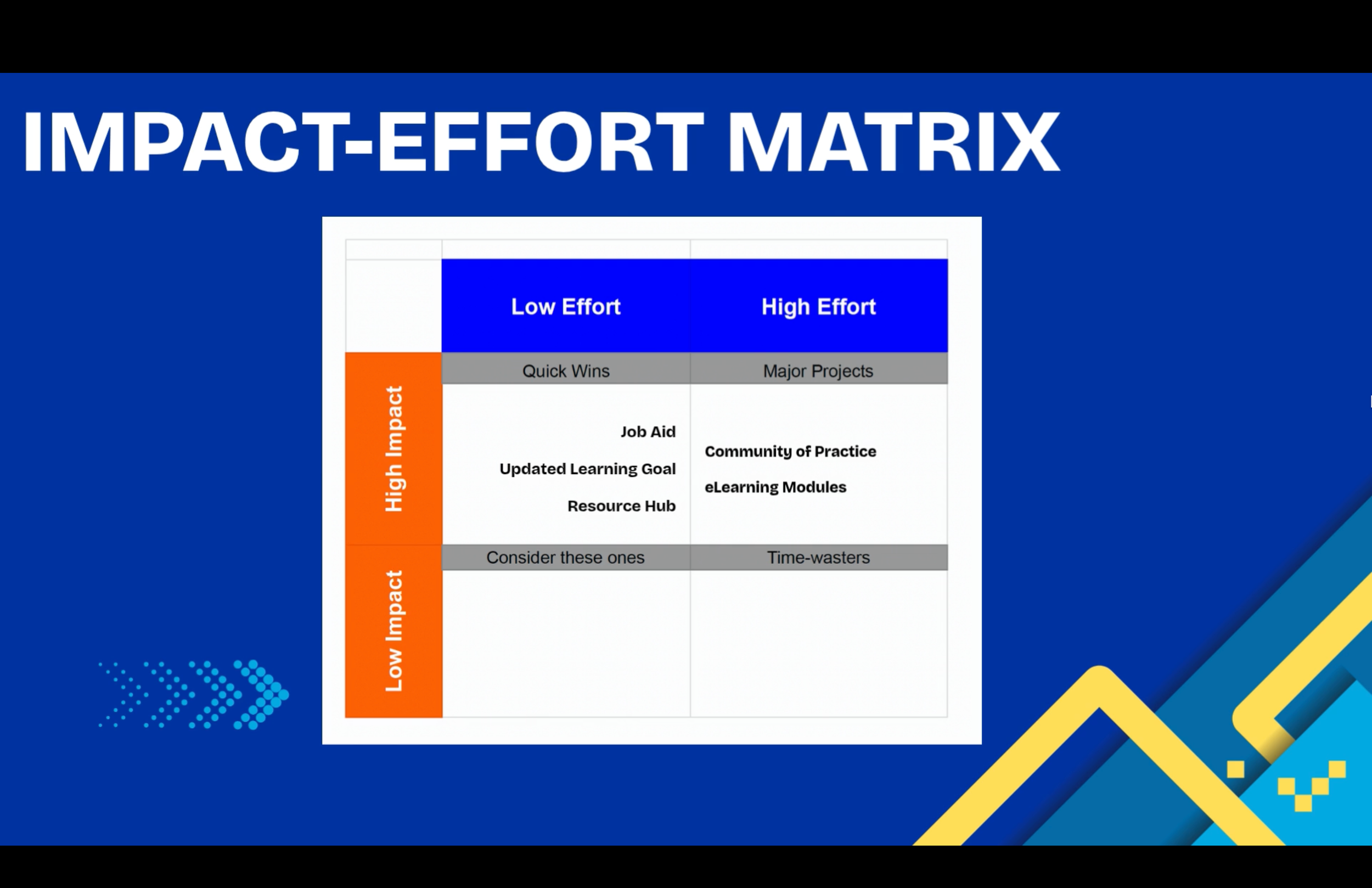

Recommendations were prioritized using a multicriteria analysis and an impact/effort matrix, separating quick wins from longer-term investments.

Quick wins: updating the program's collaboration learning goal to include observable criteria for effective collaboration, creating a job aid embedded directly in the team charter template, and building a centralized hub for communication and collaboration resources. All three are high impact, low effort, and implementable immediately.

Mid-term: a Community of Practice focused on leadership and adaptability in team-based work.

Future enhancement: scenario-based eLearning modules on communication and collaboration techniques. These carry a higher development lift but strong potential impact once the foundational pieces are in place.
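To make the prioritization logic concrete, here is a minimal sketch of how an impact/effort matrix and a simple weighted multicriteria score might be computed. Everything numeric in it is an assumption for illustration: the 1-5 scores, the 60/40 weights, the midpoint of 3, and the quadrant labels are hypothetical and are not the ratings or criteria the team actually used.

```python
from dataclasses import dataclass


@dataclass
class Option:
    """One recommendation with illustrative (hypothetical) 1-5 ratings."""
    name: str
    impact: int  # 1 = low impact, 5 = high impact
    effort: int  # 1 = low effort, 5 = high effort


def quadrant(opt: Option, midpoint: int = 3) -> str:
    """Place an option in a 2x2 impact/effort matrix (midpoint is assumed)."""
    high_impact = opt.impact >= midpoint
    low_effort = opt.effort < midpoint
    if high_impact and low_effort:
        return "quick win"
    if high_impact:
        return "major project"
    if low_effort:
        return "fill-in"
    return "deprioritize"


def weighted_score(opt: Option, w_impact: float = 0.6, w_effort: float = 0.4) -> float:
    """Weighted sum of two criteria; effort is inverted so less effort scores higher."""
    return w_impact * opt.impact + w_effort * (6 - opt.effort)


# Hypothetical ratings, chosen only to mirror the ordering described in the text.
options = [
    Option("Observable criteria in collaboration learning goal", 5, 1),
    Option("Job aid in team charter template", 4, 2),
    Option("Centralized resource hub", 4, 2),
    Option("Community of Practice", 4, 3),
    Option("Scenario-based eLearning modules", 5, 5),
]

# Rank from highest to lowest weighted score and show each option's quadrant.
ranked = sorted(options, key=weighted_score, reverse=True)
for opt in ranked:
    print(f"{weighted_score(opt):.1f}  {quadrant(opt):13}  {opt.name}")
```

A real multicriteria analysis would typically use more than two criteria (for example cost, feasibility, and stakeholder buy-in), each with its own weight; the two-criterion version here is only meant to show how the scoring and the matrix relate to each other.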

Reflection

My role on this team was Instructor Liaison, which meant I was one of the primary contacts between our team and our client, a faculty member and program coordinator. That position taught me something I didn't expect: how much of needs assessment work is relationship management before it's analysis. Keeping a client informed, calibrated, and confident in the process is its own skill set, and one the field names explicitly in job postings for a reason.

The finding I keep coming back to is the one about individual versus environmental causes. Students were graduating with near-perfect portfolio scores. By every internal measure, the program was working. But the performance environment (the clarity of expectations, the consistency of scaffolding, the specificity of feedback) wasn't fully set up to develop the behaviors employers were actually looking for. That distinction matters enormously for how you design an intervention. Misread an environmental problem as an individual one and you design training when what people actually need is a clearer job aid, a better rubric, or an explicit definition of what "effective" means.

“The gap wasn’t about whether the program taught collaboration. It was about whether the environment was consistently set up to develop the specific behaviors employers were hiring for. That distinction changes everything about how you design a solution.”